
How to extract information from a website?

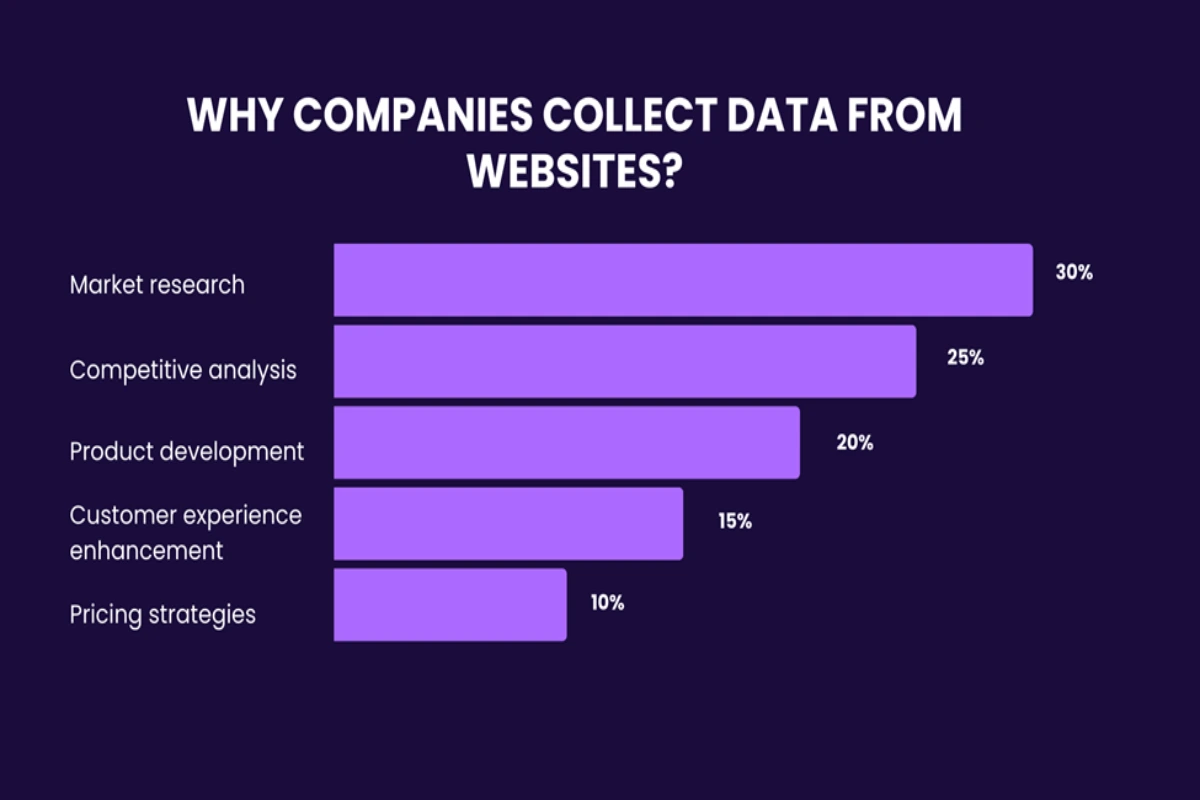

Source: Why do companies scrape data from website

The web is filled with all sorts of information, from the latest trends on social media and discussions in forums to listings on real estate portals and e-commerce websites. These days, many businesses rely heavily on this online data because it helps them stay informed, competitive, and connected to what their customers want. The question is how to get that information.

In this article, we’ll walk you through some neat ways to extract data from websites.

How businesses benefit from extracting information from websites

Generally speaking, website data is the backbone of a data-driven strategy. However, companies collect it for different purposes.

A hefty 30% of data collection efforts are dedicated to market research, which means studying the current state of a particular niche, customer demand, offerings, and so on. Not far behind, 25% go toward keeping a close eye on rivals. Product development takes a strategic 20% slice of the pie; here, businesses collect feedback and gauge market demand. Another 15% of data extraction efforts aim to improve customer experience, while the remaining 10% help organizations adjust their pricing strategies.

Different ways of extracting data from websites

1. Manually

You’ve probably used the manual approach to extract information from web pages. It boils down to this: you navigate to the desired website, locate the information, and copy it by hand into a document or spreadsheet. This is by far the simplest way to get data from websites. But does it always work?

Sure, this approach is great for collecting a limited set of data points from a small number of web pages. For example, you may extract contact details from a directory or product prices for a comparison.

But let’s be honest: manually extracting data from the web is impractical for large-scale projects or for projects that need fresh data on a regular basis.

2. Buy a ready-made dataset

This is a transactional approach to data collection. Instead of extracting data from web pages yourself, you identify and reach out to vendors or online marketplaces that sell datasets. You then get the data delivered in a common format, such as CSV or Excel files, or through an API.

It’s most suitable when you need access to a large volume of standardized data that doesn’t change frequently. It’s also effective when such data is required quickly.

But if you need highly specialized data or data that changes constantly (like real-time stock prices), pre-packaged datasets may not meet your needs.
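When a vendor delivers a dataset as a CSV file, loading it into typed records takes only a few lines with Python’s standard `csv` module. The sketch below assumes a hypothetical real estate listings file with `address`, `price`, and `listed_on` columns; your vendor’s schema will differ, so treat the column names and types as placeholders.

```python
import csv
from datetime import date


def load_listings(lines):
    """Parse CSV lines (an open file or any iterable of strings) into records.

    The column names are hypothetical; adjust them to the vendor's schema.
    """
    records = []
    for row in csv.DictReader(lines):
        records.append({
            "address": row["address"].strip(),
            "price": float(row["price"]),                      # e.g. "350000"
            "listed_on": date.fromisoformat(row["listed_on"]),  # ISO 8601 dates
        })
    return records
```

To read a delivered file, open it with `newline=""` as the `csv` documentation recommends, for example `with open("listings.csv", newline="", encoding="utf-8") as f: records = load_listings(f)` (the file name is a placeholder). Converting types on load surfaces malformed rows early, before they reach your analysis.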

3. Outsource data extraction

What if you need unique data? Delegate the task to an external service provider.

With the outsourcing model, you usually sign a contract with a vendor. You agree on the scope of work and your specific data requirements, and the service provider then uses its own resources to collect the data.

This approach proves effective when you need complex data from heavily protected websites, or when you require large-scale data extraction that is beyond the capability of manual methods or off-the-shelf datasets.

However, if you handle highly sensitive data or follow a strict confidentiality policy, you may not be satisfied with this approach. In that case, consider the next method for extracting website data.

4. Assemble an in-house team

With this route, you hire staff with relevant expertise in data extraction. This team is in charge of assembling the infrastructure and developing and maintaining the necessary web scraping tools.

This approach is most effective if data is core to your business operations, for example, if you run a background check company. But it may not suit smaller businesses or short-term projects because of the significant investment in recruitment, training, and infrastructure.
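To give a sense of what such a team builds, here is a minimal sketch of a scraper using only Python’s standard library: `urllib.request` downloads a page and `html.parser` pulls out fields. The markup it expects (elements with the class names `product`, `name`, and `price`) is hypothetical; every real site needs its own parsing rules, and production tools typically add error handling, retries, and scheduling on top.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch_html(url):
    """Download a page and decode it using the charset the server declares."""
    # A descriptive User-Agent identifies the client (the value is a placeholder).
    req = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(req, timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class ProductParser(HTMLParser):
    """Collects text from elements with hypothetical class names.

    Each element with class "product" starts a new record; text inside
    child elements with class "name" or "price" fills that record.
    """

    def __init__(self):
        super().__init__()
        self.field = None  # "name" or "price" while inside such an element
        self.rows = []     # one dict per product

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            self.rows.append({})
        elif self.rows and "name" in classes:
            self.field = "name"
        elif self.rows and "price" in classes:
            self.field = "price"

    def handle_endtag(self, tag):
        # Any closing tag ends the current field. That is enough for flat
        # markup such as one <span> per field; nested tags need a tag stack.
        self.field = None

    def handle_data(self, data):
        if self.field and data.strip():
            self.rows[-1][self.field] = data.strip()


def parse_products(html):
    """Return a list of {"name": ..., "price": ...} dicts found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.rows
```

For example, `parse_products(fetch_html("https://shop.example.com/lamps"))` would return dictionaries such as `{"name": "Desk lamp", "price": "$24.99"}`, assuming the page follows that markup; the URL is a placeholder. Keeping fetching and parsing in separate functions lets the team test parsing rules against saved HTML without hitting the site.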

5. Use APIs or browser extensions

Want to take a hands-on approach without much coding? You may want to use APIs or browser extensions to get data from web pages.

Many platforms offer APIs that give you access to their data in a controlled way. For example, you may be allowed to retrieve only particular data points or to make only a limited number of requests. Twitter, Instagram, YouTube, Reddit, and Twilio all have APIs. You’ll need some technical background to set up access to these APIs, but in the long run you’ll get data in a structured format, which significantly simplifies the processing stage.
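API responses are easy to process because each page arrives as structured JSON. The sketch below uses only Python’s standard library against a hypothetical endpoint (`https://api.example.com/v1/posts`) and assumes bearer-token authentication and pages shaped like `{"items": [...], "next": "<url>"}`. The platforms named above each document their own authentication and pagination schemes, so check those docs before adapting it.

```python
import json
from urllib.request import Request, urlopen

API_URL = "https://api.example.com/v1/posts"  # hypothetical endpoint


def get_json(url, token):
    """Fetch one JSON page, authenticating with a bearer token (an assumption)."""
    req = Request(url, headers={
        "Authorization": f"Bearer {token}",
        "Accept": "application/json",
    })
    with urlopen(req, timeout=10) as resp:
        return json.load(resp)


def fetch_all(first_url, get_page):
    """Collect items from every page by following each page's "next" link.

    Assumes pages shaped like {"items": [...], "next": "<url>" or null};
    real APIs each define their own pagination scheme.
    """
    items, url = [], first_url
    while url:
        page = get_page(url)
        items.extend(page.get("items", []))
        url = page.get("next")
    return items
```

For example, `fetch_all(API_URL, lambda u: get_json(u, "YOUR_TOKEN"))` would collect every item across pages; the token is a placeholder. Passing the page-fetching function in as a parameter keeps the pagination logic testable without network access.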

Meanwhile, browser extensions are add-ons for your web browser. They usually have user-friendly interfaces, so you won’t need technical skills.

If you decide to collect website data using APIs or browser extensions, keep in mind that:

- Not all websites offer APIs

- There may be limitations on the amount and frequency of data you can extract

- They may not offer the same level of depth or customization as other methods
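Limits on request frequency usually show up as rate limiting. Many APIs answer excess requests with HTTP status 429 (Too Many Requests), sometimes with a `Retry-After` header saying how many seconds to wait. A minimal sketch, assuming that convention, retries such requests with exponential backoff; it is a generic pattern, not any particular platform’s required behavior.

```python
import time
from urllib.error import HTTPError


def call_with_backoff(call, max_retries=4, base_delay=1.0, sleep=time.sleep):
    """Run call(); on HTTP 429, wait and retry with exponential backoff.

    A numeric Retry-After header overrides the computed delay. Any other
    error, or a 429 after the last retry, is re-raised to the caller.
    """
    for attempt in range(max_retries + 1):
        try:
            return call()
        except HTTPError as err:
            if err.code != 429 or attempt == max_retries:
                raise  # not a rate-limit error, or out of retries
            headers = err.headers
            retry_after = headers.get("Retry-After") if headers is not None else None
            if retry_after and retry_after.isdigit():
                delay = float(retry_after)           # server-specified wait
            else:
                delay = base_delay * (2 ** attempt)  # 1s, 2s, 4s, ...
            sleep(delay)
```

Wrap any zero-argument function that makes the request, for example `call_with_backoff(lambda: urllib.request.urlopen(url, timeout=10))`. Injecting `sleep` lets you test the delays without actually waiting. `Retry-After` may also be an HTTP date; this sketch simply falls back to exponential backoff in that case.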

Conclusion

Each method of extracting data from websites has its own advantages and challenges. So, if you want to collect information successfully, start by defining your business needs. Then match those needs with the most suitable method, considering the scale of data, the frequency of updates, the level of specificity, and the resources at your disposal.